Running a static website in AWS for $0.55

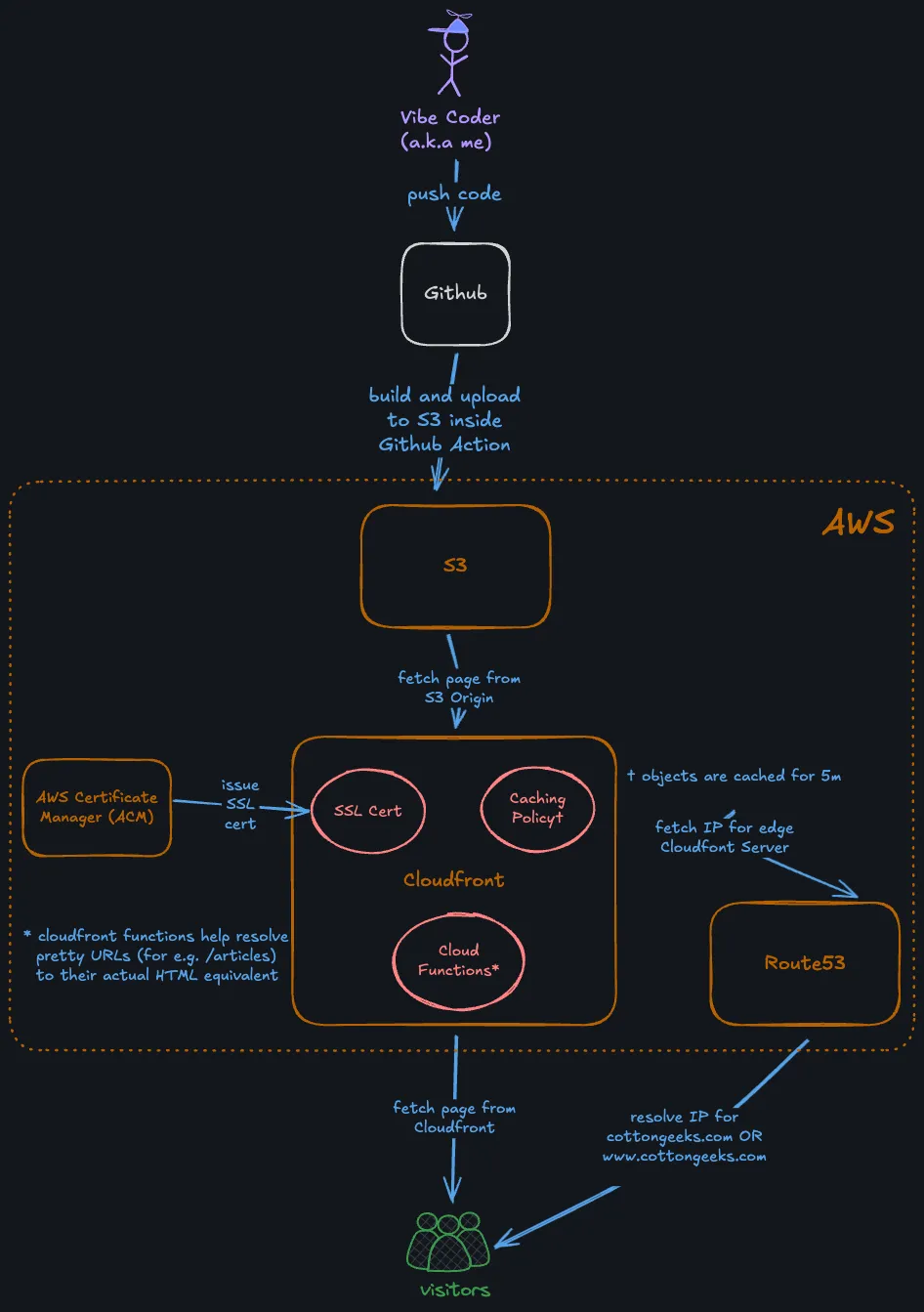

Here is an overview of all the major components involved in building and running this site:

Apart from the code, which is hosted on GitHub, all of the other components are hosted in Amazon Web Services (AWS). I find it amazing that at the moment this costs me a mere 55¢ (yes, that’s $0.55) to build and operate. Moreover, all of the $0.55 goes towards the cost of a Route53 hosted zone that supports DNS routing for the site domain, cottongeeks.com.

There are plenty of good platforms, such as Netlify, Cloudflare, and Vercel, that allow free hosting of static websites with a custom domain. But I chose AWS because I already had an account with them, and because the DIY nature of the process and the level of customizability appealed to me.

By composing a few services, we’ll get a static site with some pretty amazing features:

- Approximately unlimited scalability.

- As close to an operations-free experience as you’re ever likely to find.

- Full HTTPS support with a certificate that’s renewed automatically.

- A CDN with dozens of edge locations around the globe so that it’ll load quickly from anywhere.

- A GitHub-based workflow which will make publishing new content as easy as merging a pull request.

I will go through all the components and how they are configured below. It may be helpful if you are rolling your own static site and looking for options to host it.

Creating and building the site with Astro

The site is generated using Astro, which apparently is the new WordPress according to some folks. I chose Astro mainly because I wanted the ability to embed interactive React components in Markdown-formatted articles using MDX. Astro also had the right mix of customizability and built-in features, so it did not feel too heavy-handed or prescriptive. It also had excellent documentation.

I was able to write TypeScript code to customize many aspects of the site generation process, for example custom URL slugs and clipboard copy for anchor links. I plan to customize the site even more in the future, and I feel pretty confident Astro won’t get in the way of that.

The build for the site happens inside a GitHub Action:

```yaml
- name: Checkout repository
  uses: actions/checkout@v2

- name: Setup Node.js
  uses: actions/setup-node@v4
  with:
    node-version: '23'

- name: Install dependencies
  run: npm install

- name: Build Vite project
  run: npm run build
```

Astro is not a requirement for this kind of setup. Any framework that builds static files should be fine. My personal site, hosted on the same setup, uses a custom static site builder written in Go.

Uploading to S3

This is fairly simple with the AWS CLI, inside the same GitHub Action that builds the site above:

```yaml
- name: Configure AWS credentials
  uses: aws-actions/configure-aws-credentials@v4
  with:
    aws-access-key-id: ${{ secrets.AWS_ACCESS_KEY_ID }}
    aws-secret-access-key: ${{ secrets.AWS_SECRET_ACCESS_KEY }}
    aws-region: us-east-2

- name: Upload to S3
  run: aws s3 sync dist/ s3://cottongeeks-site --delete
```

SSL certificate with AWS Certificate Manager

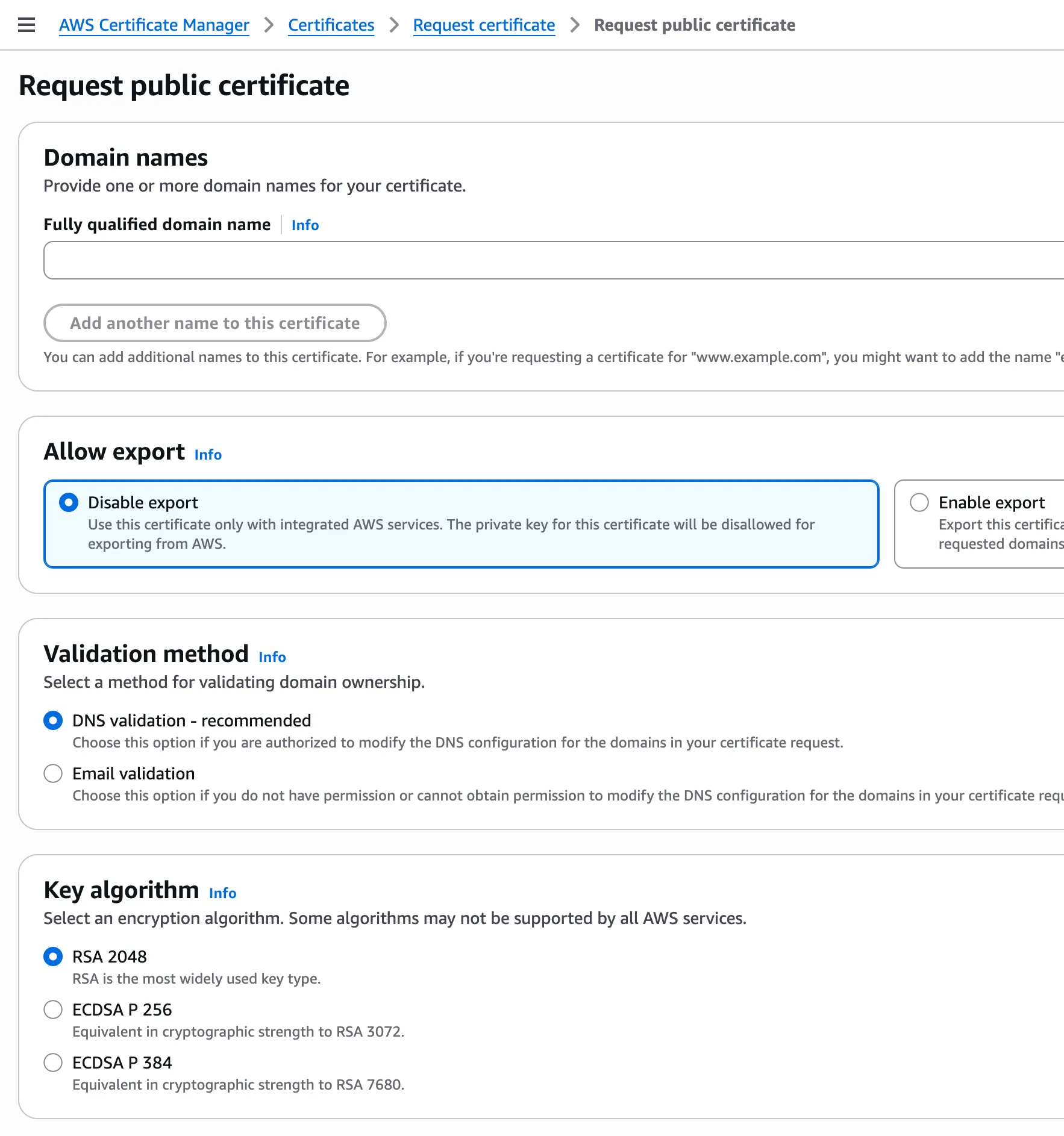

I used AWS Certificate Manager to provision a free SSL/TLS certificate for use with AWS.

Once the certificate is provisioned, it can be used by CloudFront to serve the site over HTTPS. There is one additional step, validating the domain via DNS, but that is mostly automated if you are managing the domain via Route53.

CDN with CloudFront

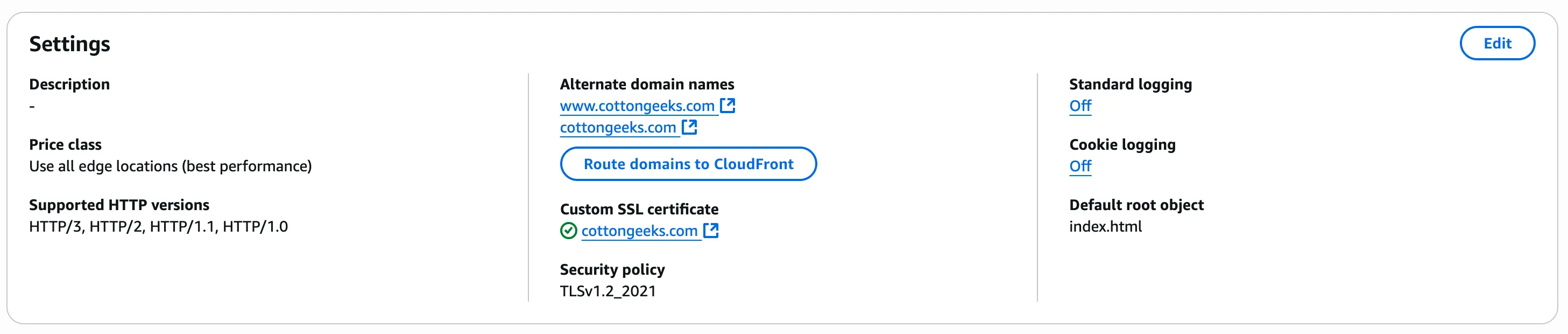

I created a pretty standard CloudFront distribution and chose to automatically redirect HTTP to HTTPS. I also attached the SSL certificate from the previous step.

Pretty URLs and Auto Redirections

I had some very specific and pedantic requirements for the structure of the URLs:

- I wanted requests made to `cottongeeks.com` to permanently redirect to `www.cottongeeks.com` before being served. That way there would only be one canonical hostname for the site.

- I wanted clean URLs without HTML file extensions. So a request made to `/articles` would automatically look for `/articles.html` from the S3 origin.

- I also wanted to redirect URLs with trailing slashes to their non-slash equivalent. For example, `/articles/` would redirect to `/articles`, which would eventually serve `/articles.html` from the origin.
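To make these rules concrete, here is a minimal sketch of the outcome I would expect for a few sample requests. The `route` function and its return shape are hypothetical and exist only for illustration; this is not the function that is deployed.

```javascript
// Hypothetical helper: describes what should happen to a request
// under the three rules listed above.
function route(host, uri) {
  // Rule 1: the bare domain redirects to the canonical www hostname.
  if (host === 'cottongeeks.com') {
    return { action: 'redirect', location: 'https://www.cottongeeks.com' + uri };
  }
  // Rule 2: a path whose last segment has no extension maps to an .html file.
  if (/\/[^/.]+$/.test(uri)) {
    return { action: 'serve', originPath: uri + '.html' };
  }
  // Rule 3: a non-root path with a trailing slash redirects to the slashless path.
  if (/.+\/$/.test(uri)) {
    return { action: 'redirect', location: uri.slice(0, -1) };
  }
  // Everything else (root, files with extensions) passes through unchanged.
  return { action: 'serve', originPath: uri };
}

var samples = [
  ['cottongeeks.com', '/articles'],
  ['www.cottongeeks.com', '/articles'],
  ['www.cottongeeks.com', '/articles/'],
  ['www.cottongeeks.com', '/'],
  ['www.cottongeeks.com', '/logo.png']
];

samples.forEach(function (s) {
  console.log(s[0] + s[1], '->', JSON.stringify(route(s[0], s[1])));
});
```

Note that `/articles/` takes two hops: a redirect to `/articles` first, and only then the rewrite to `/articles.html` at the origin.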

I used CloudFront Functions to do this. This is where choosing AWS turned out to be an advantage.

Here is the source:

```javascript
var config = {
    suffix: '.html'
};

var regexSuffixless = /\/[^/.]+$/; // e.g. "/some/page" but not "/", "/some/" or "/some.jpg"
var regexTrailingSlash = /.+\/$/; // e.g. "/some/" or "/some/page/" but not root "/"

function handler(event) {
    var request = event.request;
    var uri = request.uri;
    var response;

    // Redirect cottongeeks.com to www.cottongeeks.com
    if (request.headers.host.value === 'cottongeeks.com') {
        response = {
            statusCode: 301,
            statusDescription: 'Moved Permanently',
            headers: {
                'location': { value: 'https://www.cottongeeks.com' + uri }
            }
        };
        return response;
    }

    // Append ".html" to the origin request if the URI doesn't have a suffix
    if (uri.match(regexSuffixless)) {
        request.uri = uri + config.suffix;
        return request;
    }

    // Redirect (301) non-root requests ending in "/" to the URI without the trailing slash
    if (uri.match(regexTrailingSlash)) {
        response = {
            statusCode: 301,
            statusDescription: 'Moved Permanently',
            headers: {
                'location': { value: uri.slice(0, -1) }
            }
        };
        return response;
    }

    // Everything else is passed to the origin unchanged
    return request;
}
```

There are other (albeit clunkier) ways to achieve this. For example, my personal website uploads HTML files to S3 without an extension and then sets the MIME type to text/html so that they are served correctly to the browser by CloudFront. (see upload code)
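The extensionless-upload alternative boils down to choosing an S3 key and a Content-Type for each built file. Here is a minimal sketch of that mapping. It is not my actual upload code (which is written in Go); `toS3Object` is a hypothetical helper, and keeping `index.html` under its full name is an assumption made for the example.

```javascript
// Hypothetical helper: choose the S3 key and Content-Type for a built file.
// Assumption: index.html files keep their name; other .html files lose the extension.
function toS3Object(relativePath) {
  var isHtml = /\.html$/.test(relativePath);
  var isIndex = /(^|\/)index\.html$/.test(relativePath);

  if (isHtml && !isIndex) {
    // Without the extension, S3 cannot guess the type, so it is set explicitly.
    return { key: relativePath.replace(/\.html$/, ''), contentType: 'text/html' };
  }
  // null means "let the upload tool infer the type from the extension".
  return { key: relativePath, contentType: isHtml ? 'text/html' : null };
}

['index.html', 'articles.html', 'blog/post.html', 'styles/site.css'].forEach(function (p) {
  console.log(p, '->', JSON.stringify(toS3Object(p)));
});
```

With this mapping a request for `/articles` finds an object at exactly that key, so no rewrite is needed at the edge. The cost is that every upload has to carry the explicit Content-Type.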

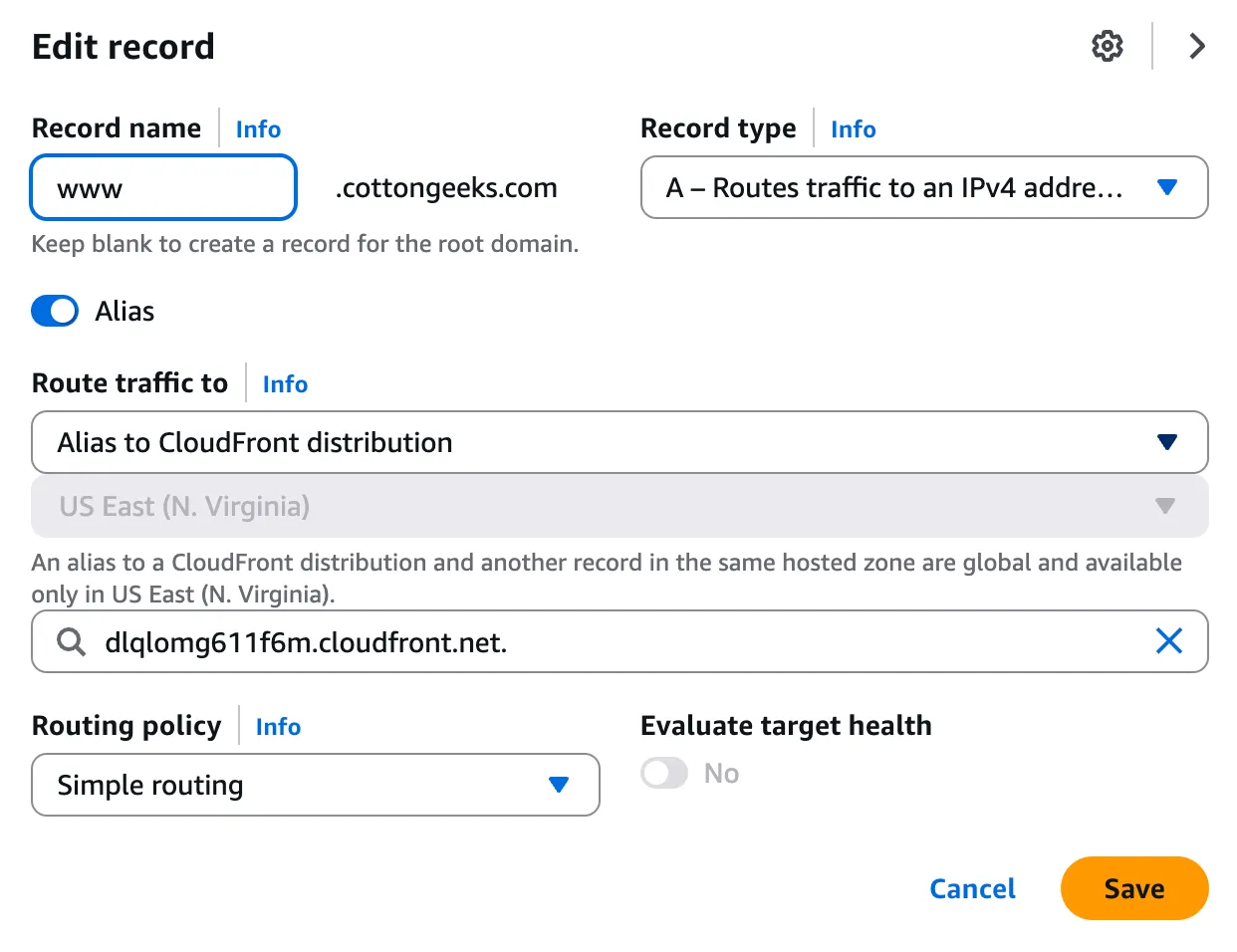

DNS Routing with Route53

I used a Route53 Hosted Zone to manage DNS records for the cottongeeks.com domain. Route53 allows A records to be aliased to a CloudFront distribution. I aliased both cottongeeks.com and www.cottongeeks.com to the same distribution we created above.
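I set the alias records up by hand, but for reference, here is a sketch of what the two records look like when expressed as a Route53 change batch. The `aliasRecord` helper is hypothetical, and the distribution domain is a placeholder. The one fixed value is `Z2FDTNDATAQYW2`, the hosted zone ID that Route53 documents for all CloudFront alias targets.

```javascript
// Hosted zone ID that Route53 uses for every alias pointing at CloudFront.
var CLOUDFRONT_HOSTED_ZONE_ID = 'Z2FDTNDATAQYW2';

// Hypothetical helper: build one alias A record for a CloudFront distribution.
function aliasRecord(recordName, distributionDomain) {
  return {
    Action: 'UPSERT',
    ResourceRecordSet: {
      Name: recordName,
      Type: 'A',
      AliasTarget: {
        HostedZoneId: CLOUDFRONT_HOSTED_ZONE_ID,
        DNSName: distributionDomain,
        EvaluateTargetHealth: false
      }
    }
  };
}

// Placeholder: substitute the domain name of your own distribution.
var distributionDomain = 'dxxxxxxxxxxxxx.cloudfront.net';

var changeBatch = {
  Changes: ['cottongeeks.com', 'www.cottongeeks.com'].map(function (name) {
    return aliasRecord(name, distributionDomain);
  })
};

console.log(JSON.stringify(changeBatch, null, 2));
```

The printed JSON has the shape that `aws route53 change-resource-record-sets` accepts through its `--change-batch` option, should you prefer scripting this over using the console.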

Testing

After configuring all this, I could finally verify that my website is served from the nearest CloudFront POP (Point of Presence):

```
curl -v https://www.cottongeeks.com
> GET / HTTP/2
> Host: www.cottongeeks.com
> User-Agent: curl/8.7.1
> Accept: */*
>
* Request completely sent off
< HTTP/2 200
< content-type: text/html
< content-length: 4836
< date: Tue, 24 Jun 2025 21:23:36 GMT
< last-modified: Tue, 24 Jun 2025 11:14:26 GMT
< etag: "5854048c759d2fa3e44e703d50adb122"
< x-amz-server-side-encryption: AES256
< accept-ranges: bytes
< server: AmazonS3
< x-cache: Hit from cloudfront
< via: 1.1 a49f020132dfafabe09a4d42b8bfbc4c.cloudfront.net (CloudFront)
< x-amz-cf-pop: BLR50-P4
< alt-svc: h3=":443"; ma=86400
< x-amz-cf-id: _bO6JBqQhGQe6m_XCavNTZEMhniXOsJyqPg3RDIWCvCFCgCNhcFUxQ==
< age: 12
```

As we can see, it is being served over HTTP/2 from the BLR50-P4 POP, which I can only assume is located in Bengaluru (where I currently am).
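The two headers that matter here are `x-cache` and `x-amz-cf-pop`. Here is a small sketch that pulls the useful bits out of them, using the header values from the response above. `describeEdge` is a hypothetical helper. Reading the first three letters of the POP name as an airport code is an assumption based on how CloudFront edge locations are typically named.

```javascript
// Hypothetical helper: summarize which edge served a response and whether it was cached.
// Expects an object of lower-cased header names to values.
function describeEdge(headers) {
  var pop = headers['x-amz-cf-pop'] || '';
  var cache = headers['x-cache'] || '';
  return {
    pop: pop,
    // Assumption: POP names start with a three-letter airport code.
    airportCode: pop.slice(0, 3),
    // CloudFront reports "Hit from cloudfront" when the edge cache answered.
    cacheHit: /^hit/i.test(cache)
  };
}

var edge = describeEdge({
  'x-cache': 'Hit from cloudfront',
  'x-amz-cf-pop': 'BLR50-P4'
});

console.log(edge);
```

For the response above this gives the airport code `BLR` and a cache hit, which matches the `age: 12` header: the object had been sitting in the edge cache for 12 seconds.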